Future Token Prediction Model (FTP): A New AI Training Method for Transformers that Predicts Multiple Future Tokens

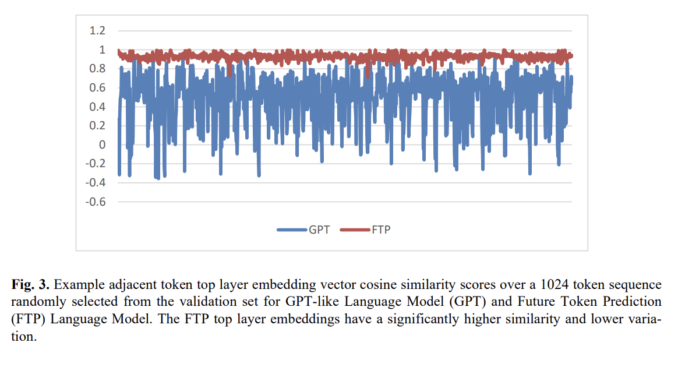

Causal language models such as GPTs are inherently prone to losing semantic coherence over longer stretches of text, because they are designed to predict only one token ahead. This design has enabled significant generative AI development […]